By Gene Hughson

Two of my favorite “bumper sticker philosophies” (i.e., short, pithy, and incredibly simplistic sayings) are “the simplest thing that could possibly work” and YAGNI. Avoiding unnecessary complexity and avoiding unneeded features are both good ideas at first glance. The problem is determining what is unnecessary and unneeded. Just meeting the functional requirements is unlikely to be sufficient.

Software quality attributes, also known as quality of service requirements, must be accounted for. It’s not just a matter of what must be done but also how it is to be done. Security, in particular, is a vital consideration that should be baked in from the start rather than bolted on at some later date.

Ed Featherston’s Cloud Computing Journal article, “It’s 11 PM – Do You Know Where Your Data Is?”, illustrates what happens when consideration of security is deferred or ignored altogether. In the article, Ed recounts picking up a rental car and discovering that it has built-in Bluetooth integration for his cell phone, which he finds a “wonderful convenience for making calls while driving in the car”. Unfortunately, he also discovers that his contact information was cached by that integration:

“The next time I got the prompt from my phone about sharing the data with my rental car, I said, No, expecting the list of numbers to be no longer available on the in-dash display. Much to my surprise and dismay, the contact information I had previously shared was still available on the in-dash display. As I searched through the menu on the in dash display, I found a list of approximately fifteen different phones (including mine) that had been bound to the Bluetooth (which makes sense, it was after all a rental car). Once I deleted my phone from the list, all the contact information I had shared went away.”

One would hope that deleting the phone from the list actually deletes the information, rather than just marking it as deleted.
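
To make that distinction concrete, here is a minimal sketch of the two behaviors. Nothing here reflects how the rental car's system actually works; `PairingStore`, `PairedPhone`, and every method name are hypothetical, and the storage is a plain in-memory dictionary chosen only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PairedPhone:
    """Hypothetical record for one phone paired with the in-dash unit."""
    name: str
    contacts: list[str] = field(default_factory=list)
    deleted: bool = False  # only consulted by the soft-delete path


class PairingStore:
    """Hypothetical in-memory store of paired phones (an assumption, not a real API)."""

    def __init__(self) -> None:
        self._phones: dict[str, PairedPhone] = {}

    def pair(self, phone_id: str, name: str, contacts: list[str]) -> None:
        # Cache a copy of the contacts, as the article describes the car doing.
        self._phones[phone_id] = PairedPhone(name, list(contacts))

    def soft_delete(self, phone_id: str) -> None:
        # Hides the phone from the display but keeps its cached contacts.
        self._phones[phone_id].deleted = True

    def hard_delete(self, phone_id: str) -> None:
        # Removes the record and its cached contacts from the store.
        self._phones.pop(phone_id, None)

    def visible_contacts(self, phone_id: str) -> list[str]:
        # What the user would see on the display.
        phone = self._phones.get(phone_id)
        if phone is None or phone.deleted:
            return []
        return list(phone.contacts)

    def stored_contacts(self, phone_id: str) -> list[str]:
        # What is still held by the store, visible or not.
        phone = self._phones.get(phone_id)
        return [] if phone is None else list(phone.contacts)
```

From the driver's seat the two paths look identical: after either call, `visible_contacts` returns an empty list. Only the hard delete leaves `stored_contacts` empty as well, which is the difference between information that is gone and information that is merely hidden.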

It really matters little whether the Bluetooth integration’s data storage was due to a deliberate decision to ignore security and privacy or to the fact that the need had not yet “emerged”. Regardless of the cause, fifteen people were put at risk of having their data compromised. When fifteen is multiplied by the number of rental cars with this particular implementation, you end up with a lot of risk. That risk was both foreseeable and avoidable.

Security and privacy have been increasingly prominent issues over the last few years. Waiting to implement security until it’s proven to be a problem may well be too late. Customers are unlikely to be happy about data leaks. Telling them that it’s their fault since they trusted you is probably not going to keep them around.

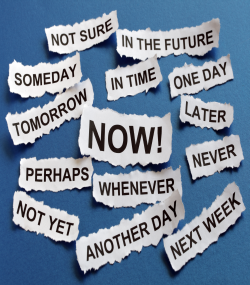

Deferring a decision to the last responsible moment means risking a deferral past the last responsible moment. When you have enough information to make a decision and you know that it needs to be made, there’s little to be gained by waiting longer. Deliberate design tends to work better and constrain less than quick fixes made under pressure. Quality is only expensive prior to the first incident caused by its lack.